DeepSeek

Model ID: deepseek-v4-flash

DeepSeek V4 Flash

- Total Context: 256K
- Max Output: 64K
- Std Input Price: Request access
- Std Output Price: Request access
- Batch Input Price: Request access
- Batch Output Price: Request access

One OpenAI-compatible control plane for managed and open models, with routing policy, cost controls, hybrid fallback, signed audit trails, and batch lanes built for production traffic.

OpenAI-compatible API with BatchIn Managed routes, audit traces, and private-beta access controls.

Create an account, copy your API key, and apply an invite code for private-beta or cohort access if you have one.

batchin-sk-xxxx...

Using OpenAI SDK? Just change one line of code:

    client = OpenAI(base_url="https://batchin-api.onrender.com/v1", api_key="YOUR_KEY")

Use BatchIn Managed, Hybrid fallback, Dedicated Capacity, Private Cluster, Data-residency, and No-cloud mode paths without changing SDKs.

OpenAI-compatible by default. Validate in Playground first, then move repeatable traffic into Batch.

    from openai import OpenAI

    client = OpenAI(
        base_url="https://batchin-api.onrender.com/v1",
        api_key="YOUR_BATCHIN_KEY",
    )

    response = client.chat.completions.create(
        model="glm-5.1",
        messages=[{"role": "user", "content": "Summarize this meeting"}],
    )

Pick production-ready model routes with cost, latency, and audit controls visible from one catalog.

DeepSeek

Model ID: deepseek-v4-flash

Qwen / Alibaba

Model ID: qwen3-next-80b-a3b

Moonshot AI

Model ID: kimi-k2-6

DeepSeek

Model ID: deepseek-v3-2

OpenAI OSS

Model ID: gpt-oss-120b

Qwen / Alibaba

Model ID: qwen3-coder-30b-a3b

Estimate cost by model and usage; use routing policy for latency and fallback control.
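To make the fallback idea concrete, here is a minimal client-side sketch that tries model IDs from the catalog in order and returns the first successful reply. It is an illustration only, not BatchIn's routing policy: `complete_with_fallback` and `fake_send` are hypothetical names, and the network call is replaced by a stand-in so the example runs offline.

```python
from typing import Callable, Sequence


def complete_with_fallback(
    send: Callable[[str], str],
    model_ids: Sequence[str],
) -> tuple[str, str]:
    """Try each model ID in order; return (model_id, reply) for the first success."""
    errors: list[str] = []
    for model_id in model_ids:
        try:
            return model_id, send(model_id)
        except Exception as exc:  # a real client would catch narrower SDK errors
            errors.append(f"{model_id}: {exc}")
    raise RuntimeError("all routes failed: " + "; ".join(errors))


# Hypothetical stand-in for a network call. In practice `send` would wrap
# client.chat.completions.create(model=model_id, ...).
def fake_send(model_id: str) -> str:
    if model_id == "deepseek-v4-flash":
        raise TimeoutError("simulated timeout")
    return f"reply from {model_id}"


used, reply = complete_with_fallback(
    fake_send, ["deepseek-v4-flash", "deepseek-v3-2"]
)
print(used, "->", reply)
```

Passing the call in as a function keeps the ordering logic separate from the SDK, so the same helper can be exercised in tests without credentials.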

Public site shows BatchIn-only cost estimates

Because competitor coverage is not verifiable for every route, the homepage no longer shows exact savings percentages. Use model detail pages for verified pricing notes, pass-through labels, and Asia / Batch lanes.

BatchIn

$15.40

Shown in USD

Model pricing note

Standard relay $0.28/M. Public site shows Asia public floor and batch lanes. Asia Shared and Asia Dedicated available on request.
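As a rough sketch of the arithmetic behind a usage-based estimate, the snippet below multiplies a token count by a per-million rate. It assumes that "$0.28/M" means USD per one million tokens and that input and output are billed at the same rate, neither of which the page states. The function name `estimate_cost_usd` is hypothetical.

```python
from decimal import Decimal, ROUND_HALF_UP

TOKENS_PER_MILLION = Decimal(1_000_000)


def estimate_cost_usd(tokens: int, price_per_million: str) -> Decimal:
    """Return the estimated cost in USD, rounded to the nearest cent."""
    if tokens < 0:
        raise ValueError("tokens must be non-negative")
    # Decimal avoids the binary floating-point drift that float math
    # introduces when multiplying prices.
    cost = Decimal(tokens) / TOKENS_PER_MILLION * Decimal(price_per_million)
    return cost.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


# Assumed usage: 55 million tokens at the standard relay rate.
print(estimate_cost_usd(55_000_000, "0.28"))  # prints 15.40
```

Actual invoices may differ by lane (Batch, Asia, pass-through), so treat the result as an estimate only.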

Pricing lane

Shows public Batch / Asia / pass-through lanes where available.

Monthly BatchIn estimate

The homepage calculator shows only public BatchIn cost estimates and does not show unverified competitor savings percentages.

Reserve high-performance capacity monthly for stable high-load inference and training.

Build differentiated products around managed inference, batch processing, audit traces, multimodal workflows, and dedicated capacity.

Build research, red-team, creative, and workflow agents with route policy, retention boundaries, and audit traces.

Process millions of documents with 3-tier priority scheduling and a fill path optimized for the lowest-cost offline throughput.

Verify outputs, preserve request evidence, replay decisions, and give enterprise teams an audit-ready trail for model-powered workflows.

Cover text, code, image, video, speech, and embeddings from one platform instead of stitching together multiple backends.

Build verifiable checkout, top-up, billing ledger, and receipt flows around USDC and Stripe.

Reserve dedicated capacity for steady high-load inference while your team keeps the runtime, model stack, and operating rules.
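For the document-processing use case above, a batch job usually starts as a file of requests. The sketch below builds one request per document in the JSONL layout used by the OpenAI Batch API (`custom_id`, `method`, `url`, `body`). Whether BatchIn's Batch lane accepts exactly this layout is an assumption, and `build_batch_lines` is a hypothetical helper, so check the BatchIn documentation before relying on it.

```python
import json
from typing import Iterable


def build_batch_lines(documents: Iterable[str], model: str) -> list[str]:
    """Return one JSON-encoded chat completion request per document."""
    lines = []
    for index, text in enumerate(documents):
        request = {
            "custom_id": f"doc-{index}",  # lets results be matched to inputs
            "method": "POST",
            "url": "/v1/chat/completions",
            "body": {
                "model": model,
                "messages": [
                    {"role": "user", "content": f"Summarize this document:\n{text}"}
                ],
            },
        }
        lines.append(json.dumps(request))
    return lines


lines = build_batch_lines(
    ["First report.", "Second report."], model="deepseek-v4-flash"
)
print("\n".join(lines))
```

Writing the joined lines to a `.jsonl` file gives one self-contained request per line, which keeps large jobs easy to split, retry, and audit.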

Route production AI traffic with cost, latency, and audit control.

Tell us where you need BatchIn Managed, Hybrid fallback, Dedicated Capacity, Private Cluster, Data-residency, No-cloud mode, or Regional Deployment.

Access planning

The customer preview does not use a homepage submission form yet. Email us your team, your model needs, and whether you need relay, BYOK, private capacity, or VaaS, and we will respond with the appropriate access path.

Helpful details to include

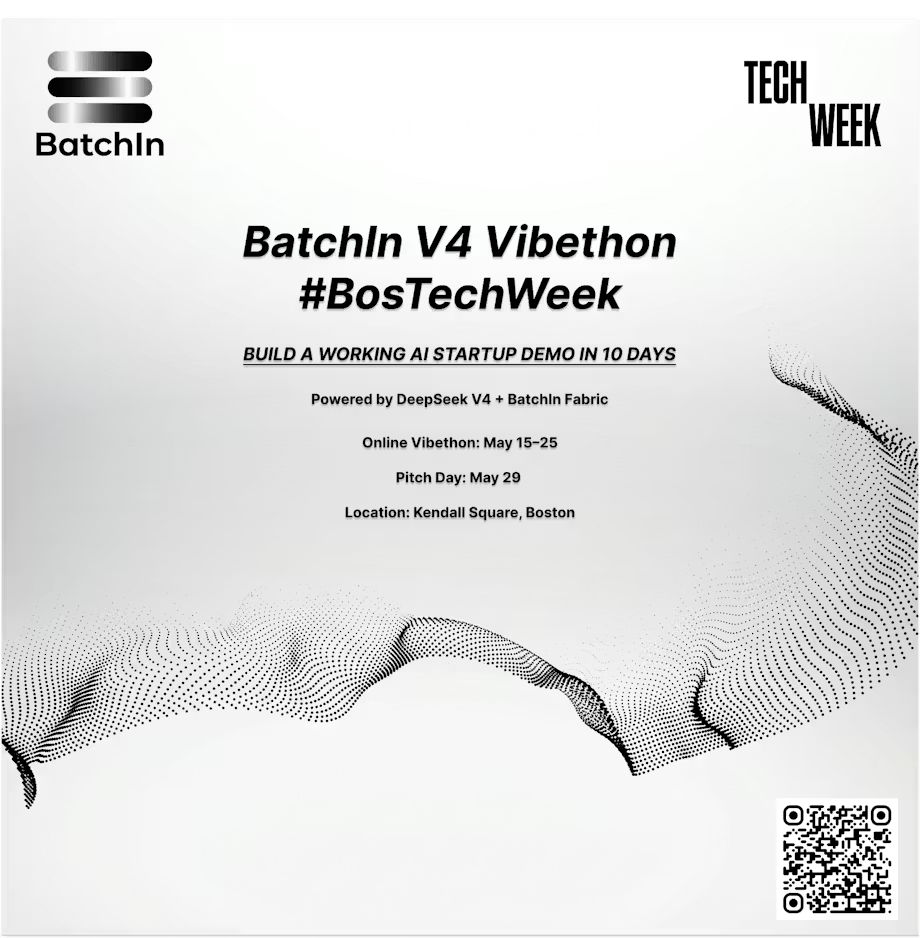

Join private-beta cohorts, hackathons, webinars, and build challenges.

Build with DeepSeek V4 and Pitch at Boston Tech Week, with BatchIn-managed registration and Demo Day RSVP on Partiful.

Build with GLM-5.1 (SWE-Bench Pro #1, 8-hour autonomous coding) and DeepSeek V4 (1T MoE, when available) through the BatchIn API. 3 days, 50–100 builders, $2,000 in API credits.

Learn how to cut your AI inference costs in half using BatchIn's Batch API. Live demo, real customer case studies, and Q&A.